This wear could be considered "common cause variation" as would the settling of the components with temperature and gas flow controls. The variation was due to set up and wear of the components over time, as plasma is hot and beam currents act like particle guns in sci-fi movies. The process would drift in normal operation, as operators (or the machine) had to tune the beam for extraction and often managed to get slightly different optimum "beam currents". The implanter is basically a mass spectrometer on steroids. Precision machining, high voltages and powerful magnets enabled the charged particles to be drawn out of the plasma and then be magnetically steered through a fine graphite slot, selecting one particular element from the original gas. They turn gases into plasma - a glowing cloud of charged particles. I explained that I had worked with ion implanters. However, it was believed that working to 6 Sigma standards would ensure that tolerances between steps would not be an issue. Sometimes in processes with tolerances on each step, the tolerances can add up and lead to problems. We discussed the original logic was for serial process steps. This time I explained in more detail that I suspect it would have been Motorola that defined the offset as they developed the original system. How accurate or valid the answer is I cannot confirm.Īshish came back to me after my first reply. It did agree with my value of the probability of landing above Z=6. I found a "calculator" that might be correct but I cannot confirm the accuracy. I even did a web search on the 1.5 Sigma offset and only found a few posts that explained it reasonably well. In either case, we would quickly see the daily setup variation on both sides of the mean they created - but this would have a zero long term component (starting value) from which the long term "deterioration" begins.īefore replying, though, I spent a while trying to find a chart that gives z-scores as high as 6 and failed - miserably. 
In the examples I have given below, the 1.5 sigma (long term) would start with a "new" part (for example, a filament with a specific resistance) or a "clean" vacuum system (that defines the optimum vacuum - it cannot be any better, so any change in the optimum would depend on the efficiency of the vacuum pumps). I explained that I had simply assumed that the value of 1.5 was based on the long term drift they (Motorola) had seen on equipment. He was not too happy with the answer either. My answer in the post was followed up in a chat to Ashish. Between that and some other research I did, the chapter did not turn out as I originally planned. I just accepted that the reasons were valid even though I thought the logic was a bit "iffy".

(If we consider the area above and below +/- 6 Sigma, we would have a 2 in a billion failure rate but, in reality, the value is not really relevant.)īecause I worked in Semiconductor Manufacturing for a long time, I understood that there were long term drifts: I had seen them and they were often part of a solution to a process problem. Now, if you look further to the right, starting at 6 Sigma, but below the x-axis, we see a blue area that represents the 1 in a billion failure rate. The large "orange" block to the right of the mean and above the x-axis, starting at 4.5 Sigma is the area under the curve that represents 3.4 failures per million (or 3.4 DPMO). If you refer to the figure below, you will see that I extended a standard 3-sigma binomial chart to 6-sigma. When we look at the normal distribution, we would usually assume that the 3.4 fails per million would be evenly split between both the positive and negative (1.7 fails on each side, which would make the z-score around 4.6 to 4.7) that is 1.7 per million above 6 sigma and 1.7 per million below -6 Sigma, but this is not how I understood the explanation. It bothered me that the 4.5 Sigma range starts from a mean value of Zero (50% on the Binomial Distribution). The long-term drift was 1.5 Sigma and the short-term was 4.5 Sigma.īut, to be honest, I never agreed with the logic. So I answered the question from memory, explaining that Motorola had "split" the 6 Sigma values into two parts: a long term and a short term drift. (and we are worried about 6 Sigma!)īut you can see it is a tad less frequent than 3.4 failures in a million. In the UK a billion is a million million, although recently it was reported as 100 million. Unless I missed the memo, this is an American Billion.

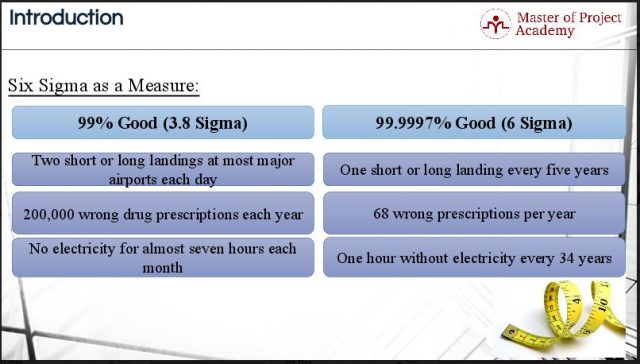

What I found was a much lower rate at 6 Sigma: around 1 in 1,000,000,000.Īs an aside. When it came to explaining the failure rate, no matter what I did, I could not get "6 Sigma" to be 3.4 per million fails. You see, when I wrote my first book, I included a chapter on 6 Sigma. The question was "Why is 'Six Sigma' called '6' sigma and not 4.5 sigma or some other sigma?" Gautam asked a question in a group that I found very interesting because it brought back memories.
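The tail areas quoted in this post (about 3.4 per million beyond 4.5 Sigma, about 1 in a billion beyond 6 Sigma, and a z-score between 4.6 and 4.7 for 1.7 per million on one side) can be checked without a z-table. The following is a minimal sketch in Python using only the standard library. It assumes a standard normal distribution and a one-sided 1.5 Sigma shift of the mean; the function and variable names are illustrative, not taken from any Six Sigma tool.

```python
import math
from statistics import NormalDist


def upper_tail(z: float) -> float:
    """P(Z > z) for a standard normal variable.

    Uses the complementary error function, which stays accurate
    far out in the tail where 1 - cdf(z) would lose precision.
    """
    return 0.5 * math.erfc(z / math.sqrt(2.0))


# One-sided tail beyond 4.5 sigma: roughly 3.4 per million.
tail_45 = upper_tail(4.5)

# One-sided tail beyond 6 sigma: roughly 1 in a billion.
tail_6 = upper_tail(6.0)

# Both tails beyond +/- 6 sigma: roughly 2 in a billion.
two_sided_6 = 2.0 * tail_6

# Assumption: limits at +/- 6 sigma with the mean shifted up by 1.5 sigma.
# The near limit is then 4.5 sigma away and the far limit 7.5 sigma away.
shifted = upper_tail(6.0 - 1.5) + upper_tail(6.0 + 1.5)

# z-score that leaves 1.7 per million in one tail (3.4 split over two sides).
z_split = -NormalDist().inv_cdf(1.7e-6)

print(f"P(Z > 4.5)          = {tail_45 * 1e6:.2f} per million")
print(f"P(Z > 6)            = {tail_6 * 1e9:.2f} per billion")
print(f"P(|Z| > 6)          = {two_sided_6 * 1e9:.2f} per billion")
print(f"6 sigma, 1.5 shift  = {shifted * 1e6:.2f} per million")
print(f"z for 1.7 ppm/side  = {z_split:.3f}")
```

The far-side term in `shifted` is negligible, which is why the shifted figure is effectively the single-sided 4.5 Sigma tail.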
